NVIDIA H100 Tensor Core GPU

An Order-of-Magnitude Leap for Accelerated Computing

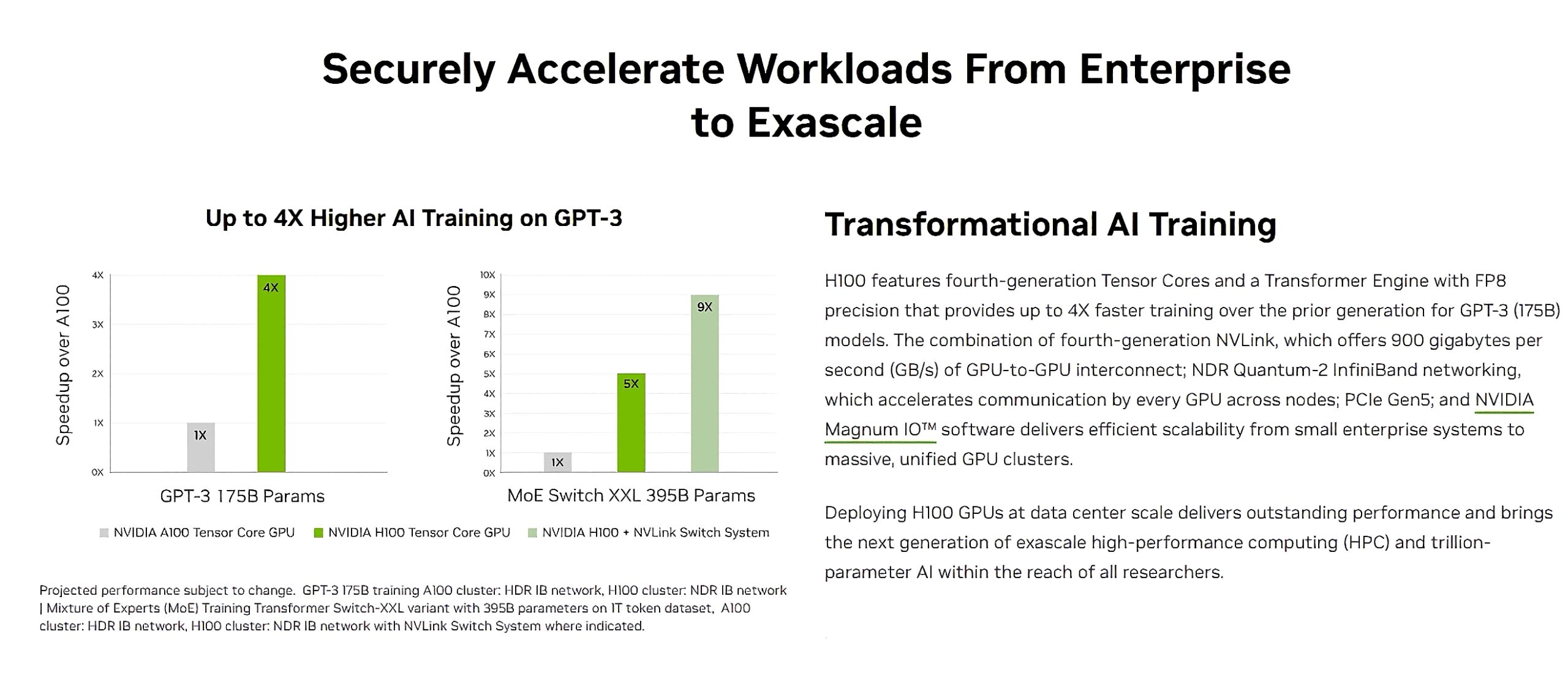

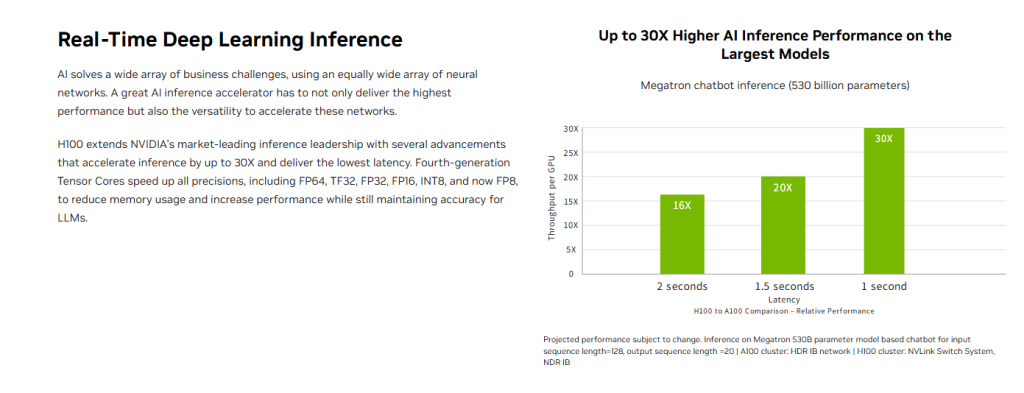

The NVIDIA H100 Tensor Core GPU delivers exceptional performance, scalability, and security for every workload. H100 uses breakthrough innovations based on the NVIDIA Hopper™ architecture to deliver industry-leading conversational AI, speeding up large language models (LLMs) by up to 30X over the previous generation. H100 also includes a dedicated Transformer Engine designed to handle trillion-parameter language models.

Supercharge Large Language Model Inference With H100 NVL

For LLMs of up to 70 billion parameters, such as Llama 2 70B, the PCIe-based NVIDIA H100 NVL with NVLink bridge uses Transformer Engine, NVLink, and 188GB of HBM3 memory to provide optimal performance and easy scaling across any data center, bringing LLMs to the mainstream. Servers equipped with H100 NVL GPUs increase Llama 2 70B performance by up to 5X over NVIDIA A100 systems while maintaining low latency in power-constrained data center environments.
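
As a rough illustration of why 188GB of memory matters for a 70-billion-parameter model, the sketch below estimates the memory needed for the model weights alone. It is a back-of-the-envelope calculation, not a sizing tool: the function name `weight_memory_gb` and the bytes-per-parameter table are illustrative assumptions, and the estimate ignores the KV cache, activations, and framework overhead, all of which add to real memory use.

```python
# Back-of-the-envelope estimate of weight memory only.
# Assumption: KV cache, activations, and framework overhead are NOT counted.

BYTES_PER_PARAM = {"fp16": 2, "fp8": 1}  # storage size per parameter


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the memory needed for model weights, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


LLAMA2_70B_PARAMS = 70e9   # nominal parameter count taken from the model name
H100_NVL_MEMORY_GB = 188   # HBM3 capacity quoted for H100 NVL above

for precision in ("fp16", "fp8"):
    needed = weight_memory_gb(LLAMA2_70B_PARAMS, precision)
    headroom = H100_NVL_MEMORY_GB - needed
    print(f"{precision}: weights ~{needed:.0f} GB, headroom ~{headroom:.0f} GB")
```

Under these assumptions, the weights occupy about 140 GB at 16-bit precision and about 70 GB at 8-bit precision, so both fit within 188GB with room left for the inference-time state that the sketch leaves out.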